AI Deep Dives

-

AI Deep Dive Nov 4: Using Large Language AI Models to Support Language Instruction

Multilingual large language models have demonstrated strong performance in natural language processing tasks. In this discussion, we explore how these transformer-based models might be used to support language instruction. In the current case study, we’ll examine possible uses of these models to support instruction in Hindi. Might these models be…

Oct. 20, 2022

-

AI Deep Dive: Exploring the Narrative Arc

When reading a longer document, such as a novel, your interpretation of the text you are currently reading is informed by what’s come before. That earlier context shapes your attention to particular details, wording, or ideas; whether you perceive an action in a positive or negative light; and what you think about a particular character.

Oct. 7, 2022

-

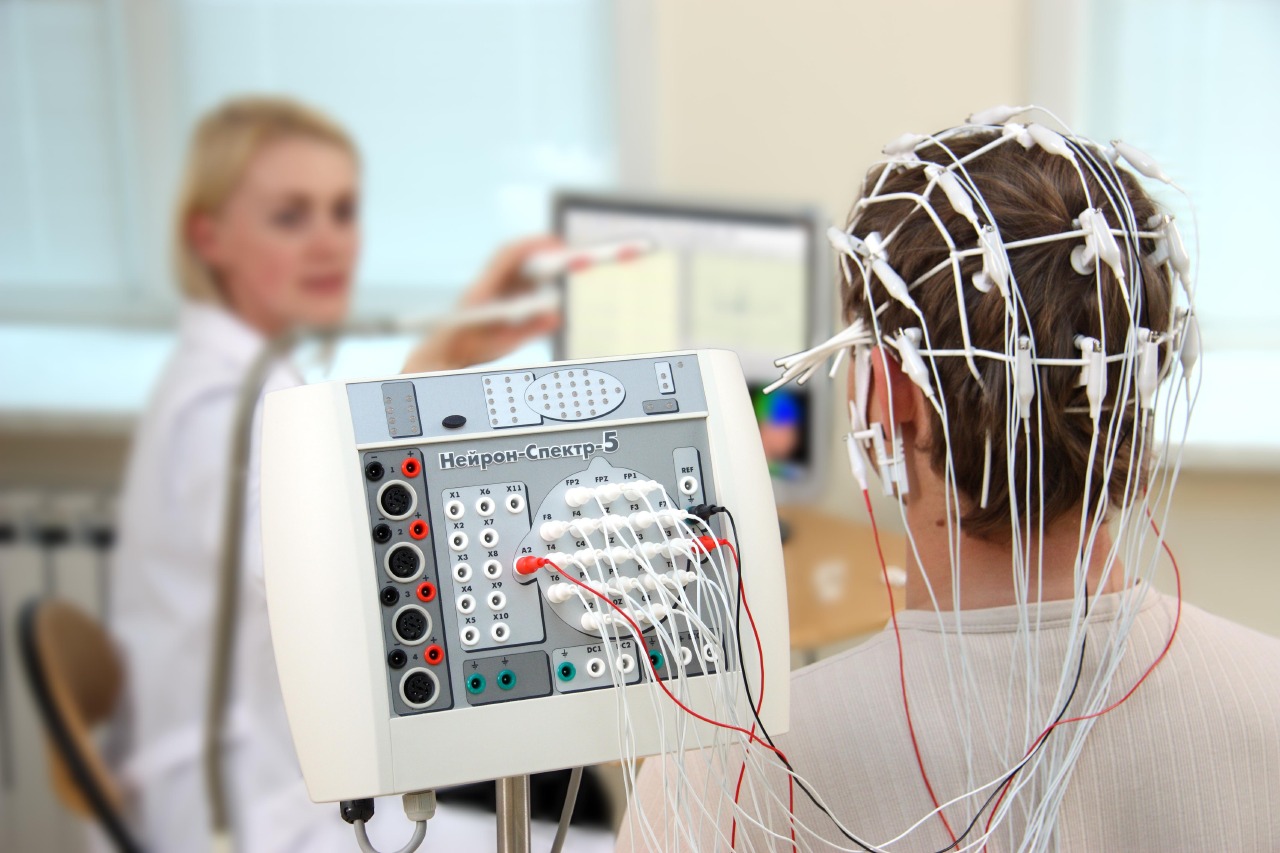

AI Deep Dive: Training a Transformer Model on EEGs

On Friday, September 30 at 1:00 pm, the Data Science Institute will host Prof. Sasha Key for a discussion of applying transformer deep learning models to the analysis of multichannel EEG recorded under multiple stimulus/recording conditions (e.g., faces vs. objects, speech vs. nonspeech, attend vs. ignore). Transformers are powerful…

Sep. 29, 2022

-

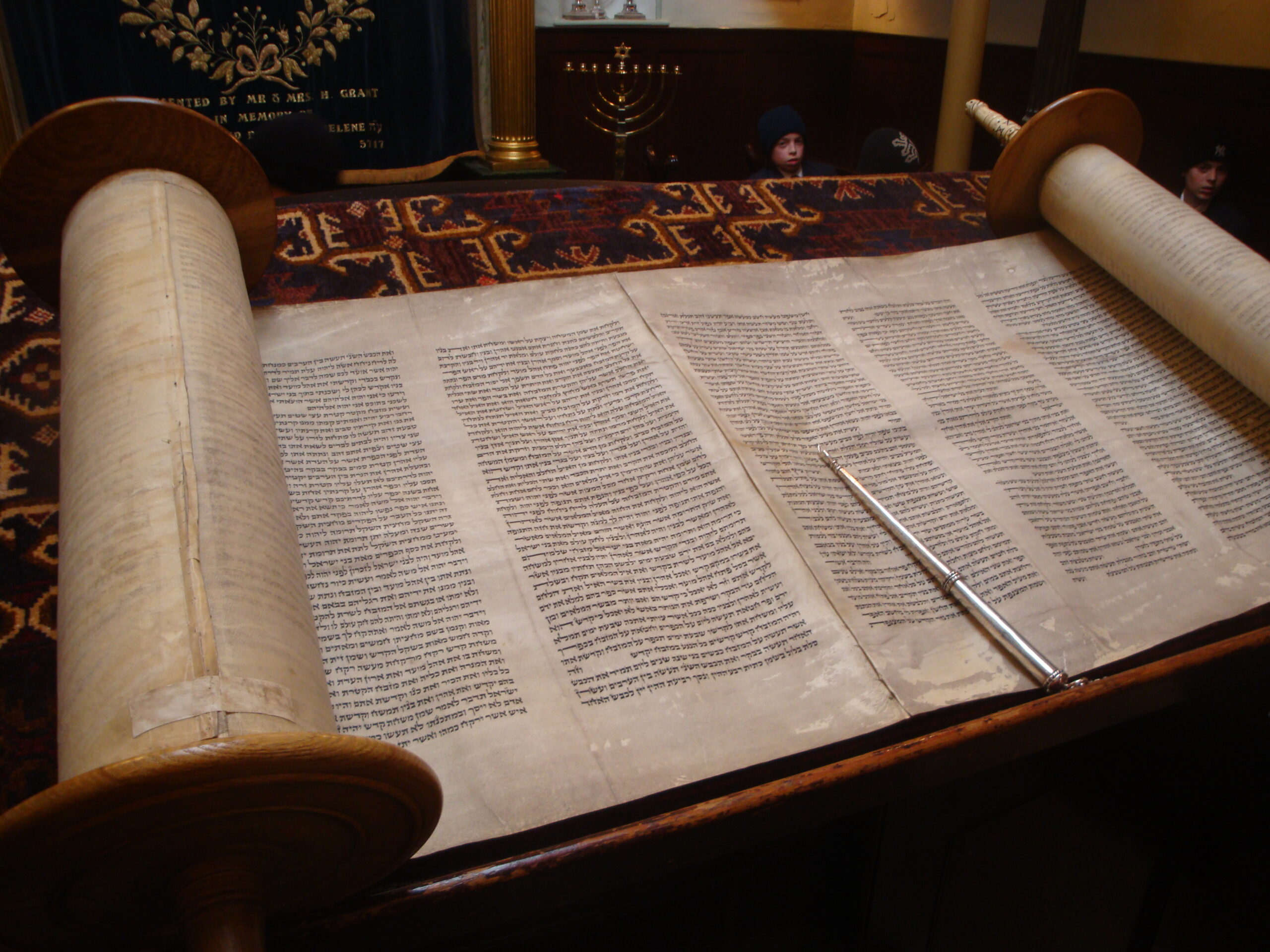

Understanding “Authorship” of the Torah (05/06/22)

About: Dr. Phil Lieberman, Jewish Studies. Research Context: The Torah was originally transcribed in medieval times, when scholars transcribed only the consonants. This could produce ambiguity; consider the New York Times: if you read the words “sh rd ths”…

Apr. 5, 2022

-

Multimodal Neuroimaging Data (04/15/22)

About: The goal of this project is to employ deep learning on paired EEG-MRI data in order to make MRI predictions based on EEG alone. The project currently has ~40 subjects with paired MRI-EEG data (collected separately but with the same task design), which will grow…

Apr. 5, 2022

-

Student Teacher Interaction Analytics (04/01/22)

About: The Education and Brain Science Research Lab is beginning the Student Teacher Interaction Analytic (STiA) project to determine the relationship between executive function language used by teachers during reading instruction and reading outcomes. Executive function (EF) is a set of cognitive controls that support us in planning,…

Apr. 5, 2022